ADR UX — Autonomous Delivery Robotics

At a high level, the goal is straightforward, as clear as a straight line between two dots: a robot takes a package and delivers it to the customer, on time and safely. At the end of the day, that's all we want and all we need.

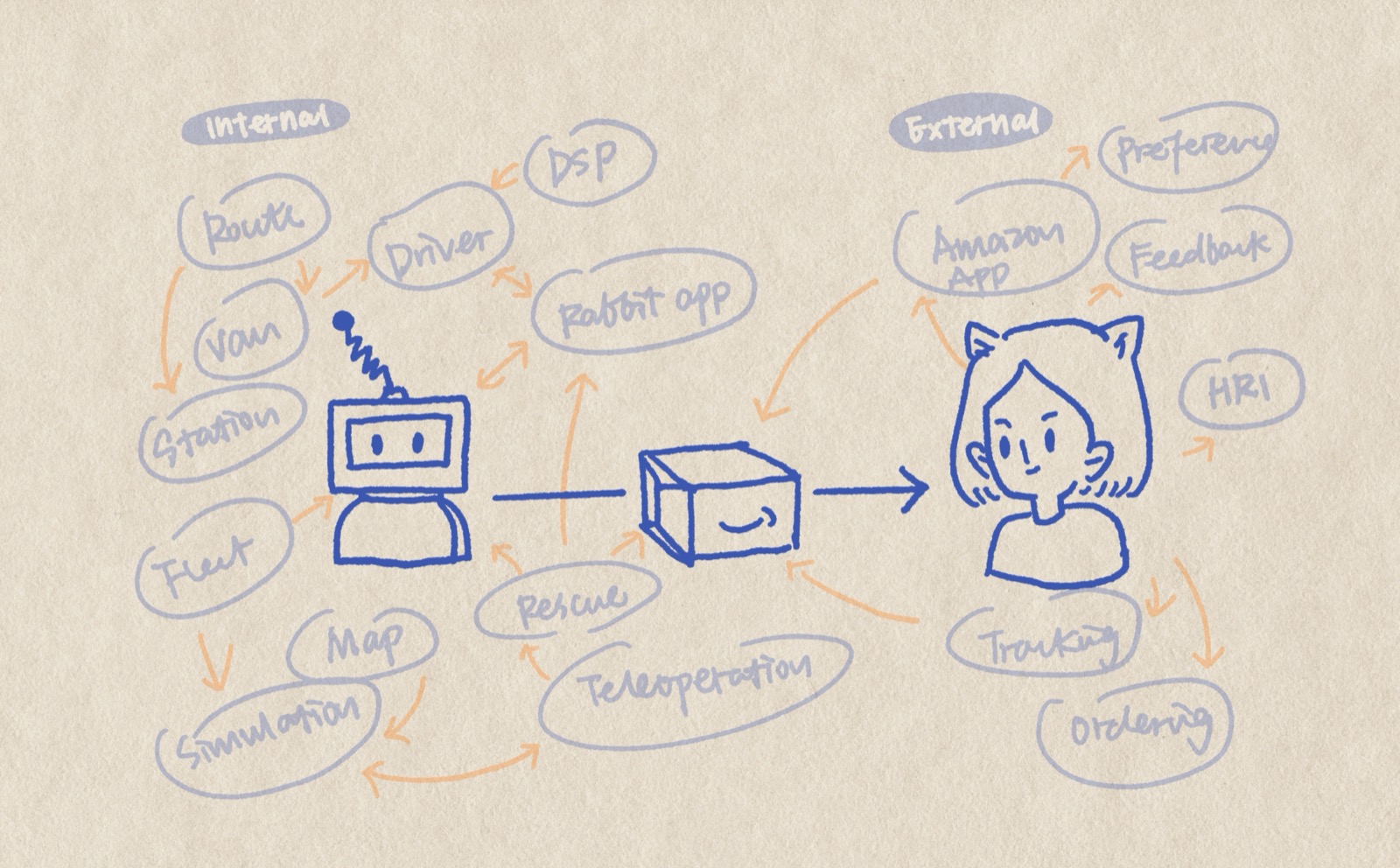

But achieving a goal this "simple" takes a complex underlying system, one that makes delivery feel seamless to the end customer, as easy as connecting two dots.

That system spans everything from internal teams to external customers: supporting systems like fleet management, simulation, teleoperation, mapping, and rescue; the on-the-road journey with drivers, DSPs, and the Rabbit app; and the pre- and post-purchase customer experience through the Amazon app (ordering, tracking, preferences, feedback).

Delivery robotics is a new layer that has to be integrated across all of these existing ones to give end customers the best experience. All of these underlying layers, plus the robot interaction itself, are my responsibility.

I'm the first and only UX designer across the entire ADR ecosystem. That means I'm not inheriting a design direction or building on someone else's research — I'm establishing what UX means for this product from scratch, while the product itself is being built.

I assess new initiatives before they solidify, flagging structural issues and interaction gaps while decisions are still cheap to change. Across teams, I coordinate with stakeholders and UX designers from different parts of the last-mile system — because the robot touches delivery workflows, driver tools, and customer-facing status, and those seams between systems are where the experience breaks or holds.

I pitched the engineering team on handing off React prototypes instead of static Figma screens. They were open to experimenting together — both sides exploring and refining the process as we went.

The workflow: I started with lo-fi interactive prototypes to walk through key user flows, identify high-level object models, and align on direction. Then I handed off React prototypes for the flows we identified, built with real design system components. The engineering team pushed the code package I shared into their codebase and connected it to their backend with minimal tweaks, which saved significant front-end development time.

On the research side, I'm exploring human-robot interaction for next-generation delivery robots. How should a person on a sidewalk understand what the robot intends to do? How do remote operators build calibrated trust — enough to let the system work, not so much that they miss failures?

These questions sit at the intersection I keep returning to: physical operations, AI, and trust. Interfaces where decisions have consequences beyond the screen.